on June 18, 2021

When I woke up today in the UK, Twitter was alive with jokes, hot takes, and sympathy about an email sent out to millions of folks on a contact list for HBO Max featuring the subject line, “Integration Test Email #1”.

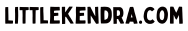

Here’s what the email looked like:

This email went out to more than just subscribers – just about everyone with an email address got one. (Except me! I felt left out. My partner got one, so it’s in the family, though.)

What happened?

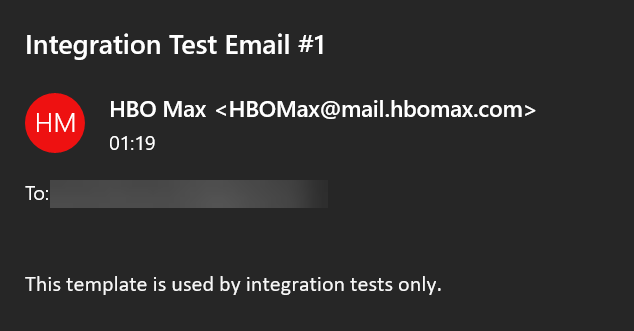

The folks at HBO Max explained a little bit about how so many people were involved in this integration test:

No really, what actually happened?

I give the folks at HBO Max credit for the supportive tone in their tweet. As someone who has had some big “oops!” moments with databases and production systems, I know the feeling of REALLY wanting to turn back time after you realize that you’ve accidentally caused things to go horribly wrong.

But it wasn’t “the intern”.

This only happens to an intern – or even to a Senior Engineer – if there aren’t safety measures in place. We all need safety measures: interns, engineers of all levels, and customers.

Two key pathways to protect

Pathway 1: Restoring production datasets into development environments

How many of us have been at a company where we restored the production database to the development environment, and then at some point someone accidentally emailed all the customers?

I have. When I ask others this question at database-related events, lots and lots of folks raise their hands. This is an often-told story.

This happens all the time.

There are reasons why production-like datasets are necessary in dev and test environments. There are also important reasons to redact and/or mask sensitive parts of that data.

Redacting and masking sensitive data protects our staff from sending out “Integration Test Email #1” from the development environment. It also protects our customers: development datasets are a prime target for hackers who use phishing techniques to obtain credentials that allow them to harvest valuable data from development environments.
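To make the masking idea concrete, here is a minimal sketch in Python. It is not a description of any particular masking tool: the `email` column, the `MASKING_KEY` secret, and the function names are all assumptions made for illustration. Each real address is swapped for a keyed-hash stand-in on the reserved `.invalid` domain, so an accidental send from a development environment has nowhere to go.

```python
import hashlib
import hmac

# Hypothetical secret; in practice it would live in a secrets store, not in code.
MASKING_KEY = b"replace-with-a-secret-from-your-vault"
# ".invalid" is a reserved top-level domain (RFC 2606), so mail to it cannot be delivered.
SAFE_DOMAIN = "example.invalid"


def mask_email(email: str) -> str:
    """Replace a real address with a deterministic, undeliverable stand-in."""
    normalized = email.strip().lower().encode("utf-8")
    digest = hmac.new(MASKING_KEY, normalized, hashlib.sha256).hexdigest()[:12]
    return f"user_{digest}@{SAFE_DOMAIN}"


def mask_customers(rows: list[dict]) -> list[dict]:
    """Return copies of the rows with the (assumed) 'email' column masked."""
    return [{**row, "email": mask_email(row["email"])} for row in rows]


# Illustrative rows standing in for a restored production table.
production_rows = [
    {"id": 1, "email": "Pat@Example.com"},
    {"id": 2, "email": "pat@example.com"},
    {"id": 3, "email": "sam@example.org"},
]
masked_rows = mask_customers(production_rows)
```

Because the stand-in is deterministic, the same customer maps to the same masked address everywhere, which keeps joins and duplicate checks working in test data. A real masking process would also need to cover names, phone numbers, and any other personal or sensitive columns.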

Pathway 2: Safety checks in pipelines

While it’s most likely that this email was caused by a production dataset being used in a development environment, it’s also possible that this was caused by an automated deployment pipeline with few or no safety checks.

Automation can either make things very unsafe, or very safe: it’s all in how your team implements automation.

Making automation pipelines safer doesn’t necessarily mean making them slower. With most automation systems, you can implement a combination of automated tests to catch problems. Also consider checks that estimate the risk of a change, such as the number of emails it would send. You may choose to allow low-risk changes to flow more freely through the system, while higher-risk changes require manual review.
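A risk check like this can be quite small. The sketch below is one possible shape, in Python, and everything in it is hypothetical: the `EmailJob` fields, the `MAX_AUTO_RECIPIENTS` threshold, and the three outcomes. It also adds one assumption beyond the paragraph above: a large send from a non-production environment is blocked outright rather than queued for review.

```python
from dataclasses import dataclass

# Hypothetical threshold: sends at or below this size are treated as low risk.
MAX_AUTO_RECIPIENTS = 50


@dataclass
class EmailJob:
    name: str
    environment: str      # e.g. "dev", "test", or "prod"
    recipient_count: int  # how many emails this job would send


def review_decision(job: EmailJob) -> str:
    """Classify a pending send as 'auto_approve', 'manual_review', or 'block'."""
    if job.recipient_count <= MAX_AUTO_RECIPIENTS:
        return "auto_approve"
    if job.environment != "prod":
        # Assumption: a large send from dev or test is never intentional.
        return "block"
    return "manual_review"


small_send = review_decision(EmailJob("welcome", "prod", 10))
big_prod_send = review_decision(EmailJob("newsletter", "prod", 2_000_000))
big_dev_send = review_decision(EmailJob("integration-test", "dev", 2_000_000))
```

Here `small_send` flows straight through, `big_prod_send` waits for a person to look at it, and `big_dev_send` is stopped. The right threshold and outcomes depend on your team and your pipeline tooling.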

What to learn from Integration Test Email #1

The main thing to remember is that this can happen to anyone. We all can be the one to make a mistake.

The key thing is to keep working towards making things safer, so that mistakes become steadily less likely.

Take the time to:

- Look at whether production data is being restored into development environments without masking of personal and sensitive data. If it is, start a conversation about fixing that.

- Identify the safety checks in your automated pipelines, and ask whether there are improvements you can initiate to make deployments safer for everyone.